
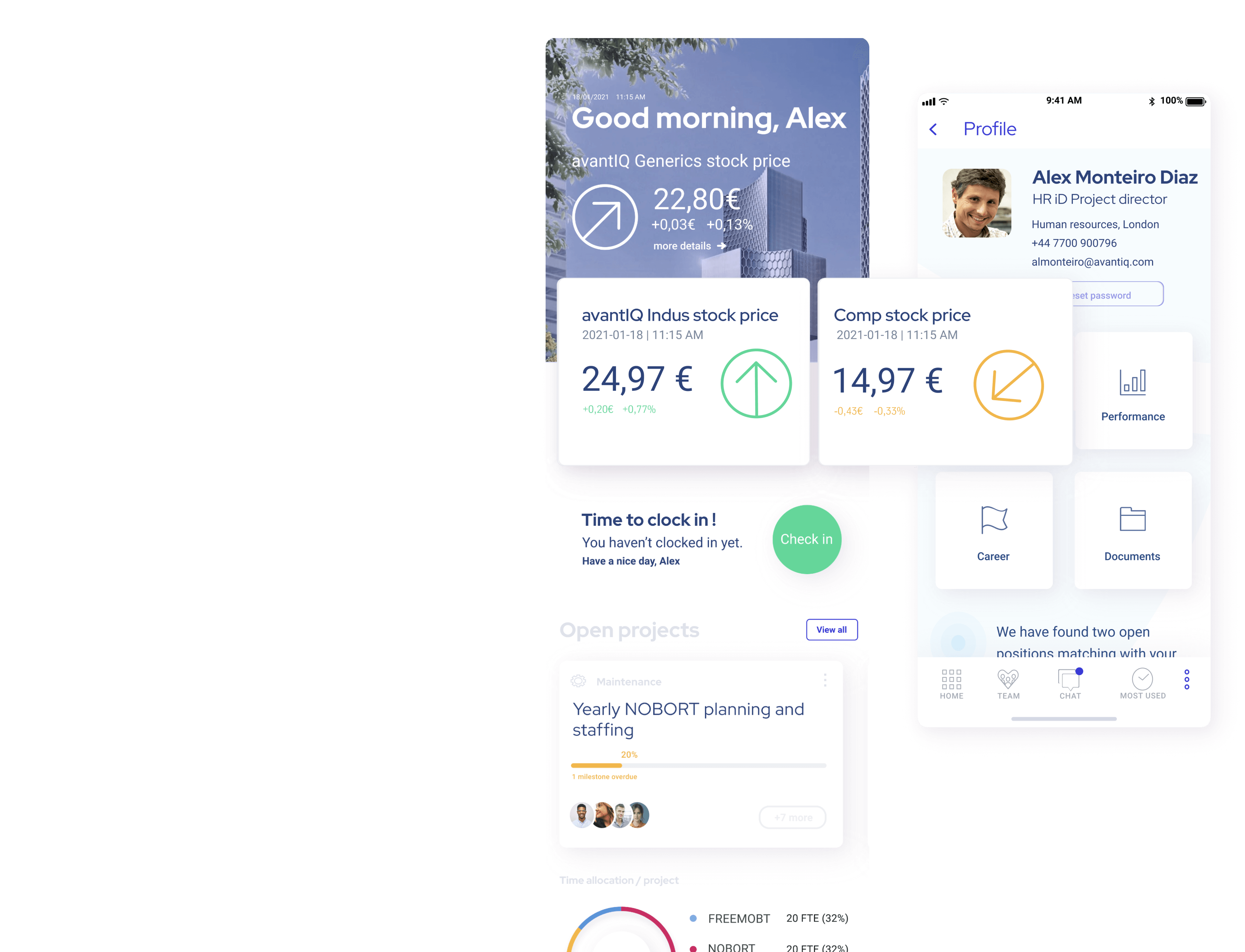

10m Global HR App Program

Client

NDA

Years

2019 - 2021

Achievements

Led requirements research for local projects

Established a rapid iteration review cycle

Led Service Provider Design team

Led QA activities

Scope

50 countries; 100k+ daily users

Overview

Important notice: I worked under a non-disclosure agreement on this project. Because this is an internal enterprise application, I am not allowed to disclose my client's name or the project. I was allowed to publish designs, but under a different name and with no mention of the client. My client was a large multinational pharmaceutical group. I was hired to help the internal team create a global corporate HR application to be used in more than 50 countries. The application had to strike a balance between harmonized standard processes and UI on the one hand and local requirements on the other. My client needed a profile able to handle both the cross-cultural complexity and the design operations complexity of the program.

This project has been a milestone in my career.

As a UX generalist, I had the opportunity to be involved in research, design operations, QA and monitoring of the product. This was enterprise software, so it was very clear to me that the people who use it every day care about one thing: getting stuff done effectively. If they can't, fewer people want to use it, until eventually no one uses it at all. The same happens if the application is not aligned with local corporate and personal cultural backgrounds. The KPIs set up by the project governance were clear: we needed users to engage with this application. I strongly advocated for prioritizing empathy for end users and integrating user-centered design into most stages of development. Another key reason was contractual: we needed local User Acceptance Testing sign-off before we could move the product to production. The scope of this project is so large that I reviewed the content of this case study four times before being happy with the result. The project also included valuable work on Change Management and Knowledge Management, but I will keep that for a dedicated article. Don't hesitate to reach out if you are kicking off a similar project and need some tips on change management with local teams.

Achievements

Measurable Impacts

Delivered design and QA for 20+ local projects on schedule

Cut iteration rates by 50% by the end of the project

App adopted by 70% of the workforce

Supervised service providers' design delivery (team of 10 designers and front-end developers)

Team Leadership & Development

Successfully led and mentored research, design and QA teams

Implemented team rituals and processes for consistent growth

Reduced negative attrition through proactive leadership

Strategic Impact

Established and improved design QA processes

Ensured the company design system was reflected in the app

Created rapid iteration review cycles with key stakeholders

Drove business metrics and revenue impact through design initiatives

Project Leadership Highlights

Supervised a service provider team of 10 UX designers, front-end developers and QA. Led local teams of researchers and SMEs.

Established stakeholder review cycles

Negotiated architectural improvements with Engineering

Delivered 20+ complex local projects on schedule

Project areas

- Research/ethnographic

- Synthesis and ideation

- Information architecture

- Design Ops

- HTML Prototyping & design

- Cross-cultural UI strategy

- Corporate App metrics

- Cross-cultural UI

- Knowledge management

- Design project management

- Change management

- Formal usability testing

- Worldwide distributed teams

How to set up an inclusive, user-centered design process for a global enterprise app targeting 50+ countries?

Above: scope of the project. Designing for different countries requires setting up a global model and adjusting it to local requirements.

Defining a standard approach mixing global standardization and local adaptability

As this project had been kicked off by the company's headquarters, the initial inputs and template were defined there as well. The program's global HQ team had spent 1.5 years on an application template skeleton flexible enough to accommodate upcoming change requests from the local companies. The template was designed as a standard development project, entirely in English. Meanwhile, local research was kicked off, so we were already feeding the global application iterations with some high-level local considerations. When local requirements popped up from different locations around the world, my role was to provide the program team with a visual prototype of the requirement or feature. Some of these local requirements were integrated into the global standard and offered to other countries when they provided global benefits. To make this work, it's important to first understand the basic assumptions about your product in the target countries, and to take cross-cultural scalability into account as early as possible. We categorized and listed the countries to be integrated first, and I could not wait to fly there, meet our local end users and start the research!

This project made use of a template approach to ensure global standardization and local adaptability.

Technical Approach (Single App with variations)

It was important to understand the technical foundation of this project: a single app binary for all countries, with country-specific features controlled within the app. A local application download page was set up for each country, and feature flags/conditional logic enabled or disabled features based on the detected country. Additional controls, such as device locale settings and geolocation, ensured the correct version was served. A large part of the program's effort was invested in a robust testing strategy covering all country variations.
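To make the "single binary, country-specific features" idea concrete, here is a minimal sketch of how a feature map could be resolved at start-up. It assumes a global template of default flags plus per-country overrides; the country list, feature names (`payslipViewer`, `localLeaveTypes`, `arabicUi`) and functions are hypothetical, and geolocation is left out for brevity.

```typescript
// Hypothetical country codes and feature names, for illustration only.
type CountryCode = "AE" | "BR" | "FR" | "JP";
type Feature = "payslipViewer" | "localLeaveTypes" | "arabicUi";

const SUPPORTED_COUNTRIES: readonly CountryCode[] = ["AE", "BR", "FR", "JP"];

// Global template: every country starts from these defaults.
const GLOBAL_DEFAULTS: Record<Feature, boolean> = {
  payslipViewer: true,
  localLeaveTypes: false,
  arabicUi: false,
};

// Approved local deviations only list what differs from the template.
const COUNTRY_OVERRIDES: Partial<Record<CountryCode, Partial<Record<Feature, boolean>>>> = {
  AE: { arabicUi: true, localLeaveTypes: true },
  BR: { localLeaveTypes: true },
};

function isSupportedCountry(code: string): code is CountryCode {
  return (SUPPORTED_COUNTRIES as readonly string[]).indexOf(code) !== -1;
}

// An explicit choice (e.g. from the local download page) wins; otherwise
// fall back to the region part of the device locale ("ar-AE" -> "AE").
function detectCountry(explicit: string | undefined, deviceLocale: string): CountryCode | undefined {
  const candidates: (string | undefined)[] = [explicit, deviceLocale.split(/[-_]/)[1]];
  for (const candidate of candidates) {
    const upper = candidate?.toUpperCase();
    if (upper !== undefined && isSupportedCountry(upper)) return upper;
  }
  return undefined;
}

// Unknown country: serve the global template unchanged.
function resolveFeatures(country: CountryCode | undefined): Record<Feature, boolean> {
  const overrides: Partial<Record<Feature, boolean>> =
    country !== undefined ? (COUNTRY_OVERRIDES[country] ?? {}) : {};
  return { ...GLOBAL_DEFAULTS, ...overrides };
}
```

In a browser build this might be called as `resolveFeatures(detectCountry(choiceFromDownloadPage, navigator.language))`, where `choiceFromDownloadPage` is a hypothetical value. Keeping overrides as diffs against the template mirrors the global-first, local-deviation approach and keeps every country's variation reviewable in one place.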

Running the research/ethnographic study

The biggest reservation stakeholders usually have about research and user experience methodologies is that they take too long and cost too much. My first job was to show stakeholders that, although we would need more time, we would get enormous value from gathering local inputs as early as possible. I also emphasized the impact on development time and on the number of iterations needed. Bringing local inputs into the conceptualization of our projects would help us design effectively for these multicultural audiences. We worked on integrating research so that it could run alongside a rapid agile development cycle, using simple techniques to get the core template model right so we could test it with locals once the initial versions had been built. Now that we had found a way to integrate local inputs effectively into our development process, it was time to immerse ourselves in the culture we were designing for!

1. Elicitation techniques

Designing a new product or expanding it to a new market is not about assumptions but facts: focus on your users first. We needed to understand how they ran standard HR processes and what would make them use the application or not. Achieving a successful outcome required a combination of several techniques.

Step #1: Study your market and document your assumptions

I started by documenting the culture, history, political situation and legal environment of each country or group of countries.

Step #2: Talk to the end users

Then, depending on the country, we had either an assigned researcher from the global team or a local SME who provided us with information about their requirements. For some countries, I took responsibility for on-site research myself. I found that a 3-day on-site trip was enough to build a connection and gather information without disrupting users' daily routines. They knew a project was under way and were mostly happy to be part of it. I always started by spending a day in their office, observing and taking notes on how they worked and used technology for specific processes. I then used questionnaires, focus groups and interviews over the next 2 days.

Step #3: Validating the information and testing the global template

Before kicking off testing of the global application to list local deviations and requirements, I shared my assumptions with both users and stakeholders. This way I could validate them and show the local team that we really cared about their culture and the way they worked. Global HQ would already screen the requirement requests; I then picked up the pre-validated ones and started working on the specs and prototype. The next step was to meet the local team again and gather insights through review sessions, keeping them under control and focused on observing workflows for specific tasks. Working with a prototype early drastically reduces costly dev iterations.

Step #4: Integration of local requirements into the global template or as a local addition

The outputs from the validation sessions were then synthesized and shared with the global project teams. Requirements were accepted or rejected and listed as agile epics/stories for the country.

Process

I believe that a transparent, open design process leads to happier clients and shorter development time. In this case, the local team would be signing off the final acceptance of their project, so it made sense to involve them as much as possible. As we worked on the validated prototype to be handed over to the dev team, we decided to empower the local team by giving them access to Figma. They could comment, or request more information on specific points through the Slack channel we had opened to communicate with them.

Cross-cultural UI: overall approach

The original approach was to get the whole application localized and translated for each country. We quickly realized that we needed the local team to be proactive in this process: we needed a native cultural expert with enough corporate culture to tell us what needed to be localized and what did not. Overall, when we could not get enough information, we aimed to localize the UI as much as possible and keep the content in English.

Our solution was a combination of localized UI & corporate content in English

I noticed that most countries used some corporate-specific words that did not need to be translated. Some UI elements did not need translation either. I made an inventory of these and included them as standard values in our global template. Overall, our aim was to make sure translations were accurate. If we could not find a cultural expert in our local team, we kept the UI standard (English) as much as possible.
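The "localized UI, corporate content in English" rule can be expressed as a string lookup with two fallbacks. This is a minimal sketch, assuming message catalogs keyed by string ID and a glossary of corporate terms that are never translated; the locales, keys and glossary entries are hypothetical.

```typescript
type Locale = "en" | "ar" | "pt-BR";
type Catalog = Partial<Record<string, string>>;

// Hypothetical inventory of corporate-specific terms shown in English in every country.
const CORPORATE_GLOSSARY = new Set(["Cost Center", "Line Manager", "OKR"]);

// Hypothetical message catalogs; "en" holds the global template's standard values.
const CATALOGS: Record<Locale, Catalog> = {
  en: { "leave.request": "Request leave", "nav.home": "Home" },
  ar: { "leave.request": "طلب إجازة" },
  "pt-BR": {},
};

// Corporate terms pass through untouched; otherwise use a reviewed local
// translation if one exists, then the English standard value, then the key
// itself so a missing string is visible during QA.
function t(key: string, locale: Locale): string {
  if (CORPORATE_GLOSSARY.has(key)) return key;
  return CATALOGS[locale][key] ?? CATALOGS.en[key] ?? key;
}
```

With this shape, a country without a cultural expert simply ships an empty catalog (like `"pt-BR"` above) and gets the standard English UI, while partially reviewed catalogs degrade string by string rather than failing.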

Process example: United Arab Emirates app localization

Preparation: market study, elicitation and first round of required iterations

Our first step was to review local legislation, collect an information sample representative of the target audience, and list our questions so we were prepared for our first round of meetings and workshops.

We then travelled to the local company's offices, presented the big picture and ran our elicitation techniques (1 day of observation + 2 days of interviews and focus groups). We also made sure we had a person who could act as a “cultural expert,” equally familiar with the target personal and corporate culture. His first task was to make sure the project's technical terms and abstract concepts could be translated and understood. Meanwhile, we tried to help everyone on the design team develop a clear understanding of the target culture, corporate flows and process variations. After this workshop, we kicked off the wireframing/prototyping phase in Figma, involving the local team in regular progress touchpoints.

Scope validation and design kick-off

We then shared our testing results and highlighted the requirements and variations. To do so, we travelled again to the local users' location and turned our Figma screens into a live prototype so users could validate the scope on a tangible product. Cost evaluation, planning and subsequent tasks were then created in Jira.

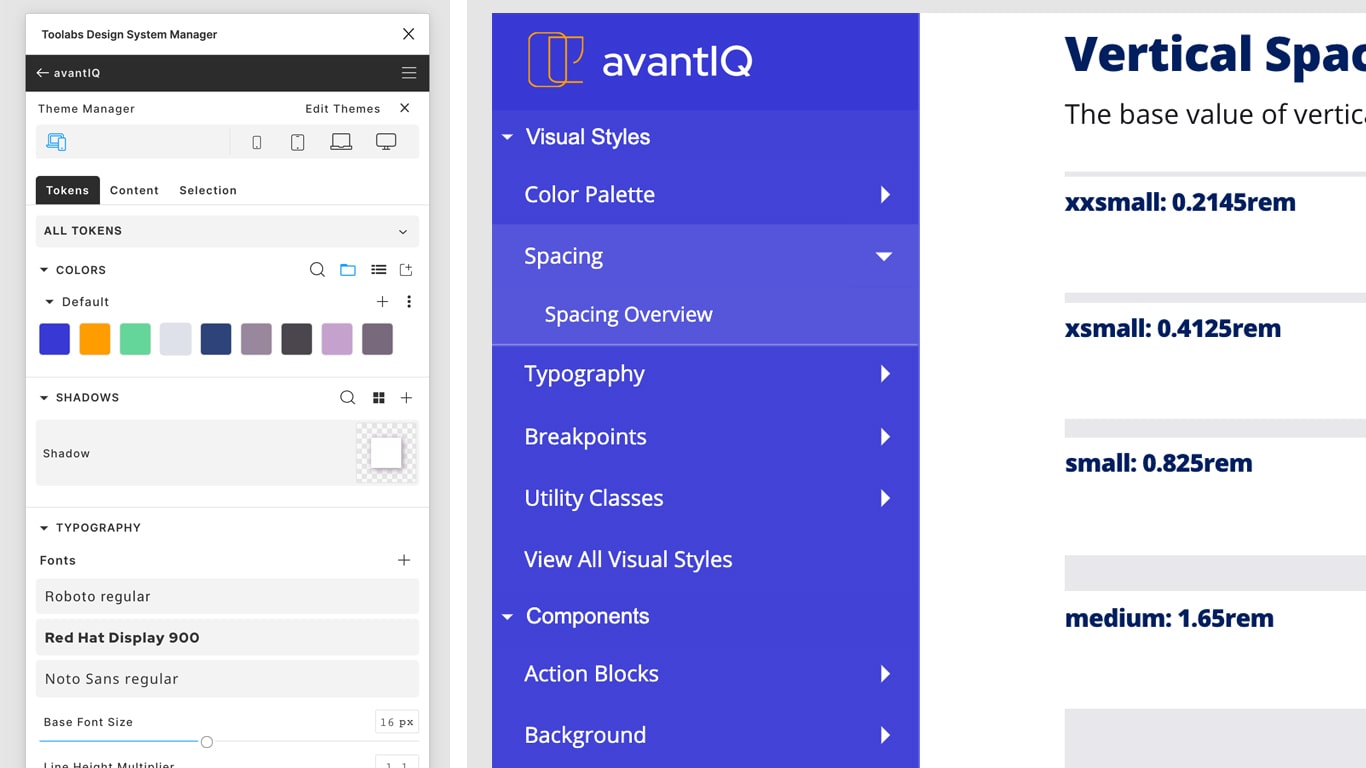

Keeping design consistent

Before kicking off any local project, we already had a global template and a standard application to present to local teams. This standard application was built on a master design system (tokens), giving us smart, reusable patterns. It was all about centralized access, reusable design resources with local adjustments, and reusable development resources. Many design systems fail because components end up being used only once, but in our case most of the components would actually be reused! Our design system was more of a starter kit: components and documentation that people could actually understand and use. The company already had brand guidelines, master iconography, symbols and pictures, and a style guide. All we had to do was create and adapt our components, leaving room for local inputs and variations.

Our unified approach included design tokens for wireframing and prototyping (left) and a complete design system for development (right)
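One way to leave "room for local inputs and variations" in a token-based design system is to let each local variant declare only the tokens that deviate from the global set. The sketch below assumes that model; the token names, values and the Arabic override are hypothetical, and the tokens are emitted as CSS custom properties so components consume tokens rather than raw values.

```typescript
// Hypothetical token names and values, for illustration only.
interface DesignTokens {
  fontFamily: string;
  fontSizeBodyPx: number;
  lineHeightBody: number; // unitless multiplier
  spacingUnitPx: number;
}

const GLOBAL_TOKENS: DesignTokens = {
  fontFamily: "'Noto Sans', sans-serif",
  fontSizeBodyPx: 14,
  lineHeightBody: 1.4,
  spacingUnitPx: 8,
};

// A local variant declares only the tokens that deviate from the global set.
const LOCAL_OVERRIDES: Partial<Record<string, Partial<DesignTokens>>> = {
  ar: {
    fontFamily: "'Noto Sans Arabic', 'Noto Sans', sans-serif",
    fontSizeBodyPx: 16,
    lineHeightBody: 1.6,
  },
};

function tokensFor(locale: string): DesignTokens {
  return { ...GLOBAL_TOKENS, ...(LOCAL_OVERRIDES[locale] ?? {}) };
}

// Serialize tokens as CSS custom properties for a :root block.
function toCssVariables(tokens: DesignTokens): string {
  return [
    `--font-family: ${tokens.fontFamily};`,
    `--font-size-body: ${tokens.fontSizeBodyPx}px;`,
    `--line-height-body: ${tokens.lineHeightBody};`,
    `--spacing-unit: ${tokens.spacingUnitPx}px;`,
  ].join("\n");
}
```

Because a locale inherits every token it does not override, a change to the global set (say, the spacing unit) propagates to all countries automatically, and each local deviation stays small enough to document.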

We ran UI localization for most of the countries in Asia, South America and Europe. Arabic localization was a long and hard process. At first I wrongly thought that mirroring the English UI would be enough. Nor is it enough to hire an interpreter to translate the text into Arabic and consider the job done. Without the support of our local cultural expert, we would not have been able to deliver this local project.

United Arab Emirates localization example

First difficulty: Arab countries use different dialects. After discussing this with locals, we realized that a standard Arabic UI was not a big issue for them. We decided to use Modern Standard Arabic (Fusha), which saved a huge amount of time in the translation process, since users in most of the Arab countries in scope would be able to use the app. Our initial research showed that Arabic users can easily switch between English and Arabic as long as the design is consistent. We were therefore in a position to apply our overall UI approach to these countries (localized UI / corporate content in English).

In terms of design, we involved the local team in regular validation touchpoints. Arabic words are longer and require a larger size and more space than the Latin alphabet, due to the complexity of the characters. Digits run left-to-right even when the UI is right-to-left. This implied several iterations and many deviations from the standard UI. We also had to change our icon set, as some icons would otherwise keep their LTR orientation. Sometimes we simply changed an icon's location in the UI, keeping its original alignment and direction.
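Two of the mechanics above, switching the UI to right-to-left and keeping digits left-to-right inside RTL text, can be sketched with standard Unicode bidi isolates. This is a minimal sketch, assuming locale strings such as `"ar-AE"`; the helper names are hypothetical, and the language set is a small illustrative subset.

```typescript
// Languages written right-to-left (illustrative subset; only Arabic was in scope here).
const RTL_LANGUAGES = new Set(["ar", "fa", "he", "ur"]);

// Unicode bidi controls: LEFT-TO-RIGHT ISOLATE and POP DIRECTIONAL ISOLATE.
const LRI = "\u2066";
const PDI = "\u2069";

// Derive the page direction from the language part of the locale.
function directionFor(locale: string): "rtl" | "ltr" {
  const language = (locale.split(/[-_]/)[0] ?? "").toLowerCase();
  return RTL_LANGUAGES.has(language) ? "rtl" : "ltr";
}

// Wrap values that must stay left-to-right (digits, employee IDs, phone numbers,
// English corporate terms) so the bidi algorithm lays them out independently
// of the surrounding RTL text.
function isolateLtr(value: string): string {
  return LRI + value + PDI;
}

// Example: an Arabic label followed by an isolated LTR value.
function rtlLabel(label: string, value: string): string {
  return `${label}: ${isolateLtr(value)}`;
}
```

In a web app, the result of `directionFor` would typically be assigned to `document.documentElement.dir`, with layouts written in CSS logical properties (such as `margin-inline-start`) so they mirror automatically; icons with inherent direction still need the manual review described above.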

Considering all the changes that the characters and words implied in terms of size, layout and copy, we ended up creating a specific sub design system for the Arabic UI. We used the Noto font family for the application in all countries, which ensured we did not get any “tofu” (the blank boxes displayed when a font lacks a glyph).

Design project management

Keeping design decisions documented

Every design deviation from the standard template was reflected in the design system and documented. This kept the rationale and context behind design decisions on record for future changes, and gave the dev team clear, written guidance. We also used the design documentation when we needed decisions from the governance team.

Clear phases closure

We kept the process transparent with local teams, but we also made clear that we had deadlines and phases to meet. Getting a formal sign-off to close each step helped us move on and avoid endless rounds of design iterations. We had clear criteria for each step, which were presented to the team during our first kick-off meetings.

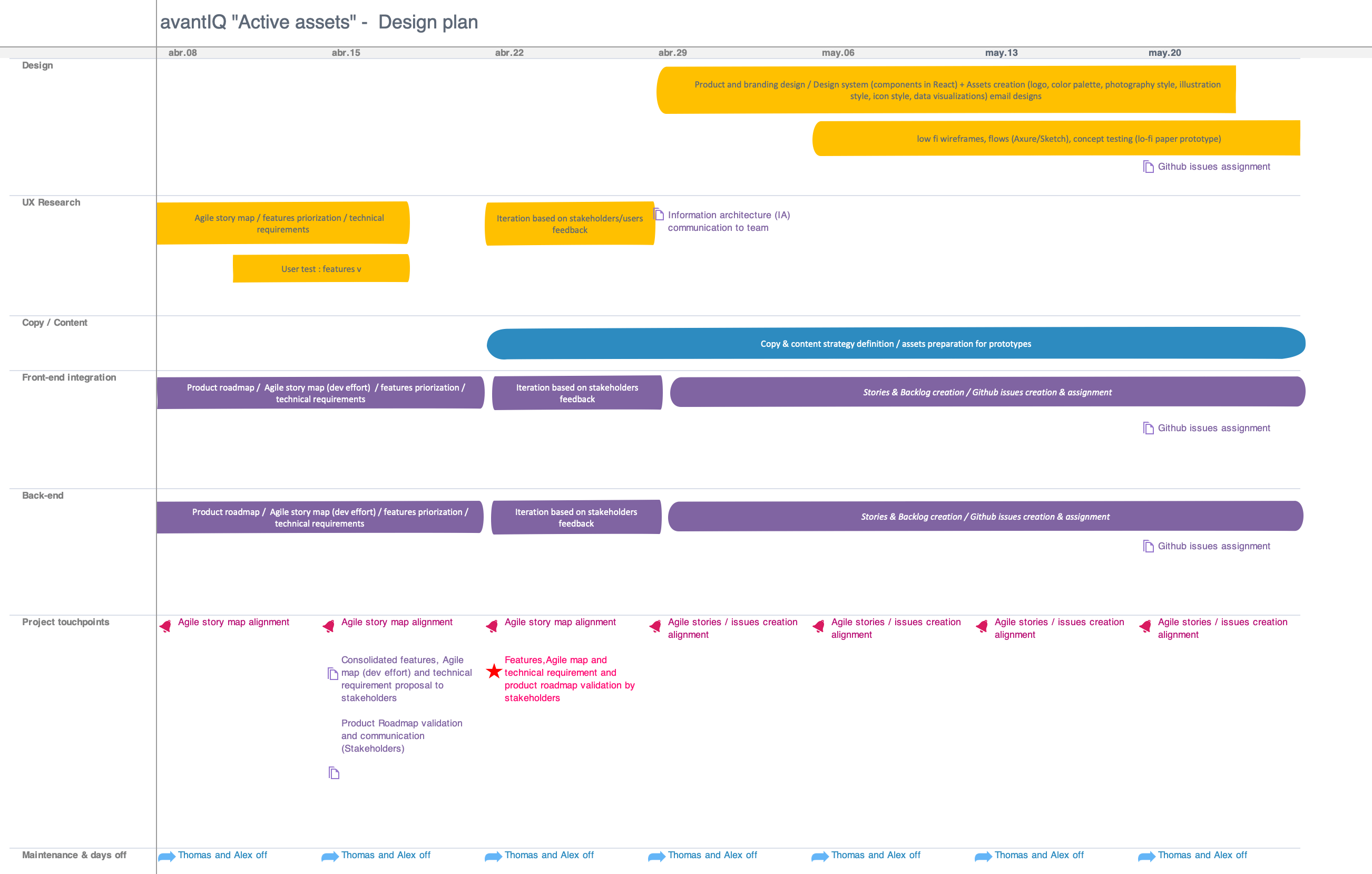

Providing the team with high-level progress and milestones

When working remotely on such a huge project, it's important to keep the team aligned and give everyone a way to understand where we stand and where we are going. We needed to cooperate with several remote teams in different time zones. This made communication harder, so we had to make sure we worked as closely as possible. I always tried to over-communicate and provide continuous feedback on the project, being as transparent and open as possible when conflicts or misunderstandings popped up. One regular, fixed meeting was enough for us to stay aligned. I tried to find volunteers to act as local facilitator and minute-taker for every meeting, so we could track progress from one week to the next. The rest of the time, we had informal catch-ups and our Slack channels to communicate on daily progress or issues.

Alongside the Jira tickets, I maintained and shared a high-level design project plan on a page

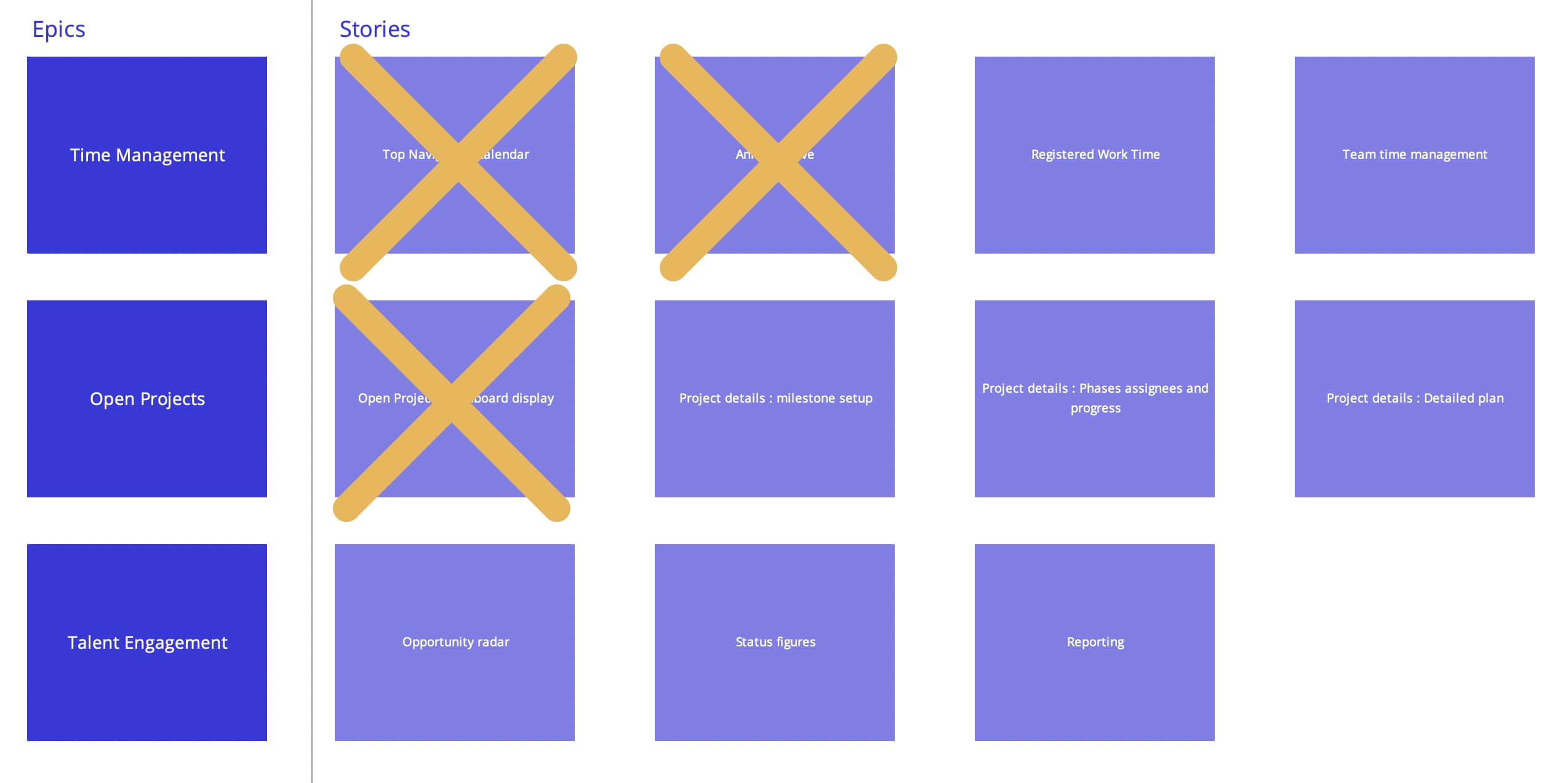

A visual checklist of epics and stories helped our team plan the workload and provided a sense of tangible accomplishment in such a large project

Acceptance, deployment and fine-tuning

The local team had been deeply involved in the design phase, so we did not face critical issues when running the acceptance tests. We did log a couple of defects, as usual, but both the design and local teams were happy with the result. I cannot stress strongly enough that involving local teams and being transparent from the very early stages of the process eases your project's day-to-day work, change management and final acceptance. We closed this local project by presenting the future iterations and releases. We kept our local cultural expert in the global project information loop and sometimes reached out for validation when dealing with standard changes in the UI.

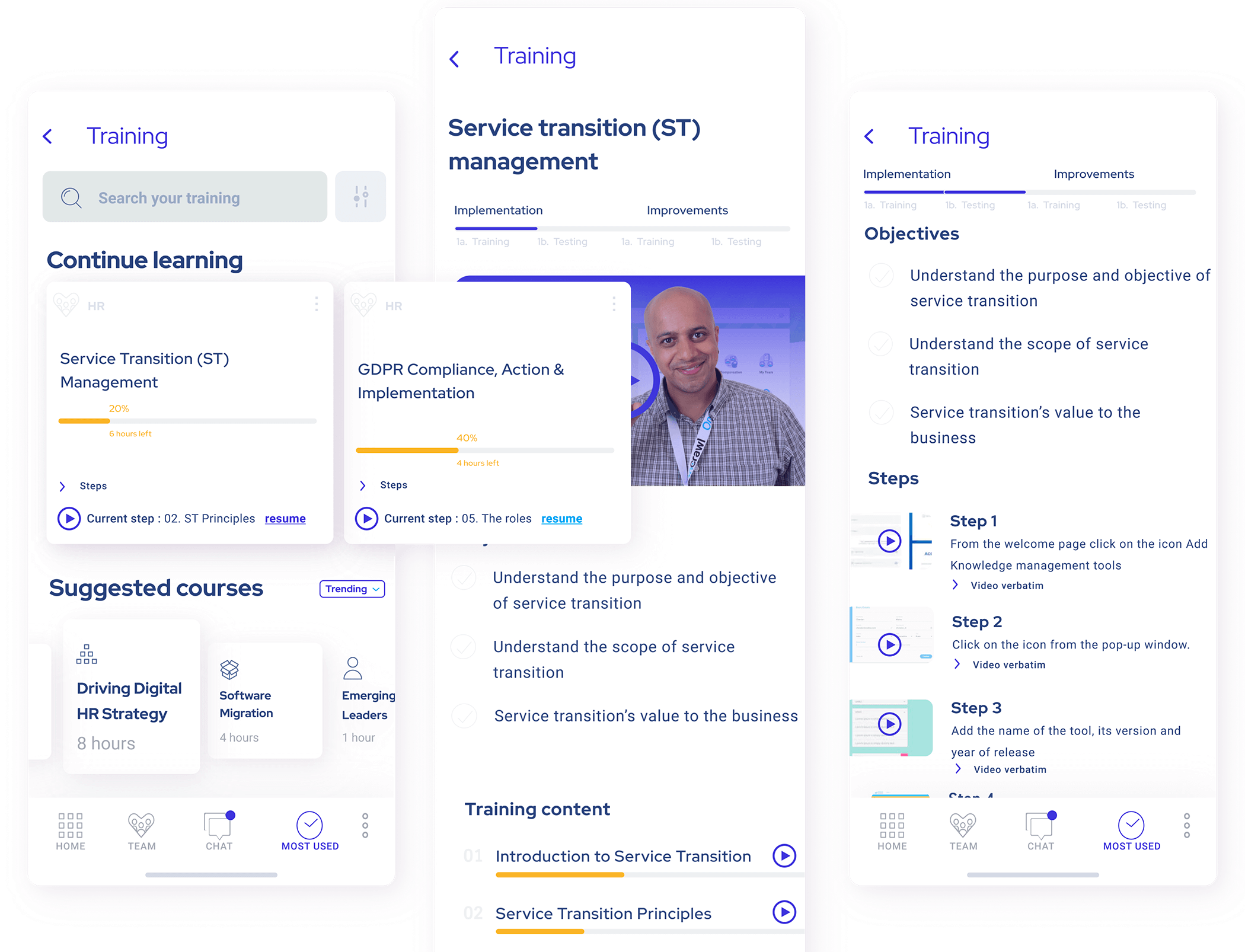

Knowledge management: one goal of this project was to support local users' e-learning practices. We aimed to convert instructions into visual experiences.

UI Testing & QA

When it comes to testing the front end, there are a few different areas that we wanted to cover:

- Unit Testing

- Integration Testing

- Accessibility Testing

- Performance Testing

- Visual Regression Testing

- Browser/device testing

- User Acceptance Testing

Overall process

When a pull request is created, continuous integration tools start to run against the code in the branch. It’s during this step in the workflow that we run our automated tests, and if any of the automated tests fail, we block the branch from being able to be merged into the master branch.

Unit testing

We used the Chai and Jest JavaScript testing tools for unit testing. We wanted to check that components still worked in isolation and that adding the app in a new language did not impact the existing languages.
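One cheap guard against a new language breaking existing ones is a key-parity check between each locale's message catalog and the English reference. The sketch below is framework-agnostic, assuming flat catalogs of `key -> string`; the function names are hypothetical and this is not presented as the project's actual test suite.

```typescript
type Catalog = Record<string, string>;

interface ParityReport {
  missing: string[];    // keys defined in the reference but absent from this locale
  unexpected: string[]; // keys in this locale that the reference does not define
}

// Compare one locale catalog against the reference (English) catalog.
function checkParity(reference: Catalog, candidate: Catalog): ParityReport {
  const refKeys = Object.keys(reference);
  const candKeys = Object.keys(candidate);
  const refSet = new Set(refKeys);
  const candSet = new Set(candKeys);
  return {
    missing: refKeys.filter((k) => !candSet.has(k)).sort(),
    unexpected: candKeys.filter((k) => !refSet.has(k)).sort(),
  };
}

// Check every locale; only locales with problems appear in the result.
function checkAllLocales(reference: Catalog, locales: Record<string, Catalog>): Record<string, ParityReport> {
  const problems: Record<string, ParityReport> = {};
  for (const locale of Object.keys(locales)) {
    const report = checkParity(reference, locales[locale] ?? {});
    if (report.missing.length > 0 || report.unexpected.length > 0) {
      problems[locale] = report;
    }
  }
  return problems;
}
```

Inside a Jest suite, this could be asserted with `expect(checkAllLocales(en, catalogs)).toEqual({})`, where `en` and `catalogs` are hypothetical imports; when it fails, the diff lists exactly which keys each locale is missing.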

Integration testing

We used Jest for integration testing. We wanted to make sure all component interactions worked flawlessly.

Accessibility testing

When deploying a new country variant of the app, we ran automated Axe tests as part of the development build to flag the accessibility changes required. We specified a couple of individual rules as mandatory for the app to be deployed to end users. These rules applied to all the countries in scope.
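Making "a couple of individual rules" mandatory amounts to splitting Axe violations into blockers and warnings. This is a minimal sketch, assuming input shaped like the `violations` array that axe-core's `axe.run()` resolves with (only `id` and `nodes` are modeled). The rule IDs are real axe-core rules, but which ones the project treated as mandatory is not stated, so the set below is hypothetical.

```typescript
// Minimal subset of an axe-core violation (assumption: only the fields used here).
interface AxeViolation {
  id: string;
  nodes: unknown[];
}

interface GateResult {
  blocked: boolean;
  blockers: string[]; // mandatory rules that failed: deployment is refused
  warnings: string[]; // other rules: reported but not blocking
}

// Hypothetical set of rules treated as deployment blockers in every country.
const MANDATORY_RULES = new Set(["color-contrast", "image-alt", "label", "html-has-lang"]);

function accessibilityGate(violations: AxeViolation[]): GateResult {
  const blockers: string[] = [];
  const warnings: string[] = [];
  for (const v of violations) {
    const entry = `${v.id}: ${v.nodes.length} element(s)`;
    (MANDATORY_RULES.has(v.id) ? blockers : warnings).push(entry);
  }
  return { blocked: blockers.length > 0, blockers, warnings };
}
```

A CI step could fail the build when `blocked` is true and still print the warnings, so teams see the full picture without every minor finding stopping a country release.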

Performance testing

Some countries had very limited broadband internet connections. We analysed the impact of images on loading time and looked for design alternatives for data-heavy pages.
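A back-of-the-envelope model helps show why images dominate on slow links: transfer time is roughly bytes × 8 divided by the link throughput in bits per second. The sketch below applies that to a page's assets against a time budget. The asset list, bandwidth and budget values are hypothetical, and the model ignores latency, TCP slow start and parallel connections, so treat it as a rough first-visit estimate.

```typescript
interface Asset {
  name: string;
  bytes: number;
}

interface PageWeightReport {
  totalBytes: number;
  estimatedSeconds: number;
  overBudget: boolean;
  heaviest: string[]; // up to three largest assets, usually images
}

// Raw transfer time: bits to send divided by link throughput (kbps -> bps).
function transferSeconds(bytes: number, bandwidthKbps: number): number {
  return (bytes * 8) / (bandwidthKbps * 1000);
}

function pageWeightReport(assets: Asset[], bandwidthKbps: number, budgetSeconds: number): PageWeightReport {
  const totalBytes = assets.reduce((sum, a) => sum + a.bytes, 0);
  const estimatedSeconds = transferSeconds(totalBytes, bandwidthKbps);
  const heaviest = assets
    .slice()
    .sort((a, b) => b.bytes - a.bytes)
    .slice(0, 3)
    .map((a) => a.name);
  return { totalBytes, estimatedSeconds, overBudget: estimatedSeconds > budgetSeconds, heaviest };
}
```

For example, a single 500 KB image (500,000 bytes) on a 256 kbps link takes 4,000,000 / 256,000 = 15.625 s to transfer on its own, which is the kind of figure that justifies a lighter design alternative for that page.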

Visual regression testing

Since we were constantly updating and pushing new code, we wanted to ensure that no visual defects made their way into our apps. We included visual regression tests in continuous integration and stipulated that any detected visual change had to be explicitly approved before the branch could be merged. Because all design decisions were documented, it was easier for the dev team to check and merge the branch. As we were using React, we chose Chromatic for visual regression testing: it shows side-by-side before-and-after screenshots with any differences highlighted.
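The core idea behind a visual regression gate is a pixel comparison between a baseline and a new screenshot, where any change requires approval. The sketch below illustrates that idea on raw RGBA buffers; it is not Chromatic's algorithm (Chromatic runs as a hosted service), and the `Screenshot` shape and tolerance parameter are assumptions.

```typescript
// Raw RGBA screenshot: 4 bytes per pixel, row-major (assumed format).
interface Screenshot {
  width: number;
  height: number;
  data: Uint8Array;
}

interface DiffResult {
  changedPixels: number;
  ratio: number; // changed pixels / total pixels
  needsApproval: boolean;
}

// A pixel counts as changed if any channel differs by more than channelTolerance.
function diffScreenshots(before: Screenshot, after: Screenshot, channelTolerance = 0): DiffResult {
  if (before.width !== after.width || before.height !== after.height) {
    // A size change is itself a visual change that must be reviewed.
    const total = Math.max(before.width * before.height, after.width * after.height);
    return { changedPixels: total, ratio: 1, needsApproval: true };
  }
  const pixels = before.width * before.height;
  let changed = 0;
  for (let p = 0; p < pixels; p++) {
    for (let c = 0; c < 4; c++) {
      const i = p * 4 + c;
      if (Math.abs((before.data[i] ?? 0) - (after.data[i] ?? 0)) > channelTolerance) {
        changed++;
        break; // one differing channel is enough for this pixel
      }
    }
  }
  return { changedPixels: changed, ratio: pixels === 0 ? 0 : changed / pixels, needsApproval: changed > 0 };
}
```

A small non-zero tolerance absorbs anti-aliasing noise between rendering environments, while any remaining difference still blocks the merge until someone approves it against the documented design decision.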

Browser/device testing for the web app

For browser and device testing, we ran local testing with the good ol' BrowserStack. Comprehensive tests were run for every major release or key component implementation, on both desktop and mobile browsers.

User Acceptance Testing

As the global lead, I was responsible for conducting formal User Acceptance Testing sessions and ensuring that local teams approved the final product. Acceptance criteria in terms of defect severity were global, but approved local requirements had to be implemented and working. Local countries had to provide their final validation, so this testing was a clear project milestone that we prepared carefully. That meant preparing all the test scripts, collaborating with local teams on logistics, developing a local test plan (outlining the scope, objectives, test cases and timelines based on local requirements, and ensuring the plan aligned with both global and local goals), preparing test data, facilitating feedback, capturing defects, running daily syncs, addressing defects (I did my best to tackle defects directly on-site, sometimes directly in the code, when possible), running retests, tracking results, negotiating with local teams on requirements that would not be ready on time, preparing a final report, and... obtaining local sign-off! We usually travelled on-site with 1 or 2 developers and allocated 2 to 3 full days to testing all the processes.
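The acceptance rule described above combines two checks: global limits on open defects by severity, and every approved local requirement passing. The sketch below encodes that as a sign-off readiness check. The severity scale, the per-severity limits and all type names are hypothetical; the case study does not state the actual thresholds.

```typescript
type Severity = "critical" | "major" | "minor" | "cosmetic";

interface Defect {
  id: string;
  severity: Severity;
  open: boolean;
}

interface LocalRequirement {
  id: string;
  approved: boolean; // accepted into this country's scope
  passed: boolean;   // verified during UAT
}

// Hypothetical global acceptance criteria: maximum open defects per severity.
const MAX_OPEN: Record<Severity, number> = { critical: 0, major: 0, minor: 5, cosmetic: Infinity };
const SEVERITIES: Severity[] = ["critical", "major", "minor", "cosmetic"];

interface Readiness {
  ready: boolean;
  reasons: string[]; // why sign-off cannot be requested yet
}

function signOffReadiness(defects: Defect[], requirements: LocalRequirement[]): Readiness {
  const reasons: string[] = [];
  // Global part: same defect limits for every country.
  for (const s of SEVERITIES) {
    const count = defects.filter((d) => d.open && d.severity === s).length;
    if (count > MAX_OPEN[s]) {
      reasons.push(`${count} open ${s} defect(s), max allowed ${MAX_OPEN[s]}`);
    }
  }
  // Local part: every approved local requirement must be working.
  for (const r of requirements) {
    if (r.approved && !r.passed) {
      reasons.push(`approved local requirement ${r.id} not passing`);
    }
  }
  return { ready: reasons.length === 0, reasons };
}
```

Listing reasons rather than returning a bare boolean makes the daily syncs and the final report easier: the same output tells the local team exactly what stands between them and sign-off.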

Feedback and iterations

After a country had been using the application for more than 6 months, we started collecting data and planned an on-site feedback session. The question we needed to answer was basically: are people really using our product? The answer helps the product team figure out how to really help, add value and benefit the people using the product. We calculated the adoption rate with the following formula: adoption rate = number of 30-day active users / total number of users in scope. Luckily, people actually used the application in most countries. For countries reporting poor adoption figures, before planning any session we tried to understand whether any external issue was preventing people from using the app (change in mobile phone assets, company IT policies...). The metrics we set up for this project were: time on task (more complicated to measure than it seems!), success rate, error rate, degree of enjoyment, time to first task and abandonment rate. Before contacting the countries, we also made sure their defect backlog was empty, with no issue left unaddressed. Once we were confident in the data, we planned 1 day to catch up with the local team, run our metrics testing and make sure nothing had been left behind. This feedback session was the official closure of the project in terms of meetings and tasks. We usually nominated a limited group of users whom we would contact regularly for short follow-up interviews.
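The adoption-rate formula above translates directly into code. This is a minimal sketch, assuming each in-scope user has a last-activity timestamp (or `null` if they never used the app); the `UserActivity` shape and function name are hypothetical.

```typescript
interface UserActivity {
  userId: string;
  lastActive: Date | null; // null: in scope but never used the app
}

const DAY_MS = 24 * 60 * 60 * 1000;

// adoption rate = users active in the last `windowDays` days / all users in scope
function adoptionRate(users: UserActivity[], asOf: Date, windowDays = 30): number {
  if (users.length === 0) return 0;
  const end = asOf.getTime();
  const cutoff = end - windowDays * DAY_MS;
  const active = users.filter(
    (u) => u.lastActive !== null && u.lastActive.getTime() >= cutoff && u.lastActive.getTime() <= end,
  ).length;
  return active / users.length;
}
```

Keeping never-active users in the denominator matters: the formula divides by all users in scope, not only by those who installed the app, so an external blocker such as an IT policy shows up as low adoption instead of disappearing from the data.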

Final product and lessons learned

As I mentioned in the first part of this case study, this project has been a milestone in my career. It allowed me to be involved in all phases and dimensions, from research to delivery and measurement. The complexity and pace of the project shifted my mindset, making me more humble and, I would say, wiser. Traveling to meet people from different cultures to gather local input also made me a better professional. It helped me realize that user research and localization are about being human and empathetic, without preconceptions. I noticed that being transparent about the design process, and honest early on about what would and would not be possible, really helped gain engagement from local teams. It's all about showing that you DO care. If I had to summarize some key things to keep in mind when working on this kind of project, this would be my advice:

- Research and UX methodologies do work: they save time, money and frustration

- Talk to the end user and involve them

- Some user evaluation methods are less applicable than others to a culturally diverse user base

- Keep your design process transparent

- Prototyping early will drastically reduce the number of future costly dev iterations

- Document EVERYTHING

- Define a clear approach to localization (e.g. localized UI with content in English)

- Make good use of your design system

- Hiring a translator for localization won't do the job

- Over-communicate with distributed teams

- Choose UX metrics carefully and stick to them

- You need to put a bit of yourself into this kind of project, so if you don't care (which is fine), it's better not to do it.